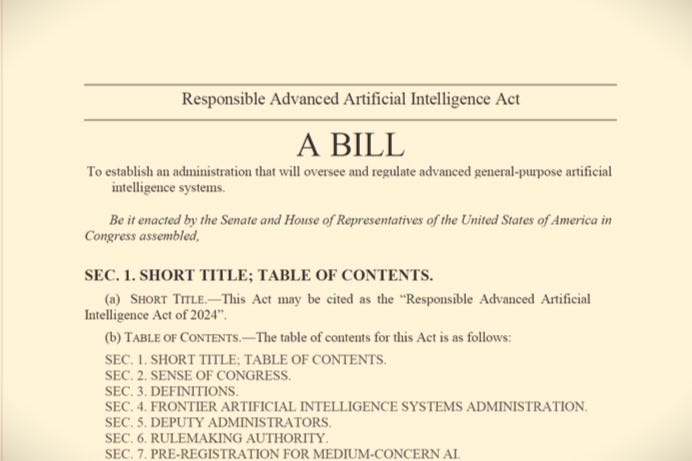

The UNITED STATES RESPONSIBLE ADVANCED ARTIFICIAL INTELLIGENCE ACT

A BILL To establish an administration that will oversee and regulate advanced general-purpose artificial intelligence systems

In 2023, the U.S. Department of State’s Bureau of International Security and Nonproliferation commissioned a report from Gladstone AI, a consultancy with expertise in AI and a current member of the U.S. Artificial Intelligence Safety Institute Consortium (AISIC). Gladstone, known for its ties to the U.S. military and connections with Sam Altman’s Y Combinator in Silicon Valley, completed the report and its companion survey of AI in February 2024. The report is vast and comprehensive, and it merits several close readings, both to unearth its contents and to weigh the plausibility of its national security alarms as a motivator for the U.S. executive, legislature, and diplomatic corps.

Buried within a footnote on page 102 of the report is a link to a full 40-page proposed draft of The UNITED STATES RESPONSIBLE ADVANCED ARTIFICIAL INTELLIGENCE ACT (US RAAIA): A BILL To establish an administration that will oversee and regulate advanced general-purpose artificial intelligence systems. This proposed bill outlines the creation of a new agency, introduces new criminal laws, and sets forth premarket licensing requirements for AI technologies, including those being deployed right now by Microsoft and Google. As a particular treat for lawyers, the complete text of Section 14 on CIVIL LIABILITY is presented below. Enjoy.

---

SEC. 2. SENSE OF CONGRESS.

It is the sense of Congress that in recent years, artificial intelligence (AI) has rapidly grown more powerful. Computer scientists do not fully understand how AI systems work, nor do they know how to reliably control advanced AI systems. Without additional precautions, we cannot be confident that advanced AI systems will not develop bioweapons, launch automated cyberattacks, manufacture armed drones, or otherwise cause a violent and dangerous catastrophe….

---

SEC. 14. CIVIL LIABILITY.

(a) WHO OWES DUTY OF CARE.—All persons engaged in the development or use of artificial intelligence owe a duty of care to exercise appropriate caution. The duty of care is owed to all persons who are residents of the United States. This duty of care is owed by any person who knowingly owns, possesses, trains, develops, deploys, or uses any of the following—

(1) artificial intelligence,

(2) any specialized hardware that is designed to support AI, or

(3) the model weights for any frontier AI.

(b) DUTY OF CORPORATE PARENT OR SENIOR CORPORATE OFFICER.—A person owes the duty of care described in subsection (a) if that person has both—

(1) the authority to control the behavior of a person who owes the duty of care described in subsection (a), and

(2) actual or constructive knowledge that the person who owes the duty of care described in subsection (a) is violating that duty of care or is likely to violate that duty of care.

(c) OBLIGATIONS UNDER DUTY OF CARE.—Persons who are subject to this duty of care have an affirmative obligation to ensure each of the following:

(1) None of their AI systems cause harm to innocent bystanders, i.e., to persons who are not customers, users, or developers of the AI and who have not maliciously interfered with the AI.

(2) Their frontier AI systems do not escape or spread to third party hardware whose owners have not affirmatively consented to host that frontier AI.

(3) The model weights of their frontier AI systems are not leaked, stolen, or otherwise made publicly available.

(4) Their frontier AI systems are reasonably secure against misuse by third parties, which includes the obligation to—

(A) attempt to identify the most important avenues for misuse of their frontier AI,

(B) monitor their frontier AI for potential misuse, and

(C) upon becoming aware of a third party's misuse or credible threat to misuse their frontier AI, immediately deny that third party access to their frontier AI.

(5) Their high-performance AI hardware is reasonably secure against misuse by third parties, which includes the obligation to—

(A) attempt to identify the most important avenues for misuse of their high-performance AI hardware,

(B) monitor their high-performance AI hardware for potential misuse, and

(C) upon becoming aware of a third party's misuse or credible threat to misuse their high-performance AI hardware, immediately deny that third party access to their high-performance AI hardware.

(d) JOINT AND SEVERAL LIABILITY.—All persons who have violated the duty of care imposed by this Act with respect to the same AI system, high-performance AI hardware, or set of model weights are jointly and severally liable for any violations of that duty of care that contributed to the same harm or to a set of substantially related harms.

(e) PRIVATE RIGHT OF ACTION.—A person, group of people, or putative class who allege specific facts that plausibly suggest a claim for at least $100 million in tangible damages based on a violation of the duty of care established by this section or based on the strict liability established under this section shall have a private right of action and may bring suit for those damages, together with costs of suit and reasonable attorneys’ fees, in any federal district court that has personal jurisdiction and venue under Title 28 of the United States Code. For claims of less than $100 million in tangible damages, this section is not intended to create, destroy, or modify any private rights of action.

(1) QUALIFYING DAMAGES.—Damages are considered “tangible” if they are wrongful death, physical injury or illness, direct financial losses, conversion, the lost value of destroyed or corrupted data, payments made in response to ransomware attacks, or damage to physical property or real estate.

(2) EXCLUDED DAMAGES.—Damages are not considered “tangible” if they are emotional distress, libel, slander, invasion of privacy, consequential damages, loss of goodwill, loss of business opportunities, or violations of intellectual property rights.
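As an illustrative aside that is not part of the draft bill, the $100 million threshold in subsection (e) can be sketched as a simple filter-and-sum. The category labels and function names below are hypothetical paraphrases of paragraphs (1) and (2); how a court would actually classify or value a given harm is outside the scope of this sketch.

```python
# Hypothetical labels paraphrasing the "tangible" categories in subsection (e)(1).
TANGIBLE_CATEGORIES = {
    "wrongful_death",
    "physical_injury_or_illness",
    "direct_financial_loss",
    "conversion",
    "lost_data_value",
    "ransomware_payment",
    "property_damage",
}

# "at least $100 million in tangible damages"
THRESHOLD_USD = 100_000_000


def tangible_total(claimed):
    """Sum only the claimed amounts whose category counts as tangible.

    `claimed` is an iterable of (category_label, amount_in_usd) pairs.
    Anything not listed above (for example emotional distress or loss of
    goodwill) is ignored, mirroring the exclusions in subsection (e)(2).
    """
    return sum(amount for category, amount in claimed
               if category in TANGIBLE_CATEGORIES)


def meets_threshold(claimed):
    """True when the tangible portion alone reaches the threshold."""
    return tangible_total(claimed) >= THRESHOLD_USD


claims = [
    ("wrongful_death", 60_000_000),
    ("emotional_distress", 80_000_000),  # excluded category, not counted
    ("ransomware_payment", 45_000_000),
]
print(tangible_total(claims), meets_threshold(claims))
```

In this made-up example only the two tangible items count, so the excluded emotional distress claim does not help a plaintiff reach the threshold.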

(f) PUBLIC RIGHT OF ACTION.—The Administrator may sue any person who violates the duty of care established by this section or who is strictly liable under this section.

(1) CONTENT OF LAWSUIT.—Such a lawsuit may include any of the following—

(A) a request for injunctive or equitable relief,

(B) an attempt to recover damages on behalf of identified victims for distribution to those victims; and

(C) an attempt to recover damages on behalf of the public at large.

(2) CIVIL PENALTY.—If the Administrator is successful in such a lawsuit, the Court shall assess a civil penalty of at least $25,000 and no more than $500,000 per defendant, payable to the Treasury, taking into account the degree to which each defendant has contributed to major security risks and the profit, if any, that each defendant derived from the violation. A defendant who knowingly continued to violate the duty of care may be assessed an additional civil penalty of up to $100,000 per day of the continuing violation.

(3) PROSECUTION OF LAWSUIT.—The Administrator may directly prosecute such a lawsuit, or may refer such a lawsuit to the Department of Justice for prosecution.
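As an illustrative aside that is not part of the draft bill, the arithmetic of the civil penalty in paragraph (2) can be sketched as a per-defendant range. The function name and the reading that the daily amount stacks on top of the base maximum are assumptions made for illustration. The text itself only says the additional penalty "may be assessed", which is why the lower bound below does not change.

```python
BASE_MIN_USD = 25_000    # "at least $25,000"
BASE_MAX_USD = 500_000   # "no more than $500,000 per defendant"
DAILY_MAX_USD = 100_000  # "up to $100,000 per day of the continuing violation"


def civil_penalty_bounds(knowing_days=0):
    """Return (minimum, maximum) civil penalty in USD for one defendant.

    `knowing_days` is the number of days the defendant knowingly continued
    the violation. The daily penalty is discretionary, so it raises only
    the upper bound.
    """
    if knowing_days < 0:
        raise ValueError("knowing_days must be non-negative")
    return BASE_MIN_USD, BASE_MAX_USD + DAILY_MAX_USD * knowing_days


print(civil_penalty_bounds())    # no knowing continuation
print(civil_penalty_bounds(30))  # 30 days of knowing continuation
```

Under this reading, the per-day component quickly dominates: after 30 days of knowing violation the ceiling is seven times the base maximum.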

(g) NEGLIGENCE PER SE.—In any lawsuit alleging a violation of the duty of care created by this subsection, a defendant shall be deemed negligent per se if any of the following apply:

(1) The defendant was required to obtain a frontier AI permit and failed to do so.

(2) The defendant violated the terms of their frontier AI permit.

(3) The defendant made a material misrepresentation (including a misrepresentation by omission) on their application for a frontier AI permit.

(h) STRICT LIABILITY.—In any civil lawsuit where the plaintiff or plaintiffs have alleged specific facts that plausibly suggest a claim for at least $100 million in tangible damages caused by a frontier AI system, each defendant shall be strictly liable for all tangible damages caused by any event that arises out of or meaningfully relates to that frontier AI system.

(1) SUBSTANTIAL FACTOR CAUSATION.—If strict liability applies, then a plaintiff is not required to prove that a defendant's actions were the proximate cause of the plaintiff's harm. Instead, a plaintiff may prove that a defendant's actions were a substantial factor in causing the plaintiff's harm.

(2) NO REQUIREMENT TO SHOW DEFECT.—If strict liability applies, then a plaintiff is not required to prove that any aspect of a defendant’s AI was defective.

(i) EXCEPTIONS FOR BONA FIDE ERROR.—The provisions of subsections (g) and (h) shall not apply to a defendant who shows by a preponderance of the evidence that any violation of the duty of care established by this section was unintentional and resulted from a bona fide error notwithstanding the maintenance of procedures reasonably adapted to avoid any such error. Bona fide errors include errors that are solely due to clerical errors, arithmetic errors, or printing errors. An error of legal judgment or technical judgment with respect to a person’s obligations under this statute is not a bona fide error.

(j) NO DEFENSE BASED ON OPEN SOURCE.—It shall not be a defense or excuse for any civil liability under this section that a defendant’s role was limited to hosting, developing, fine-tuning, or distributing a product or service that is free, collaborative, or open source.

(k) FORESEEABILITY OF MISALIGNMENT.—It shall not be a defense or excuse for any civil liability under this section that a defendant was unable to foresee the precise manner in which a frontier AI system would become misaligned or unreliable. As a matter of law, persons developing frontier AI are deemed to have foreseen the general possibility that an apparently well-aligned AI system may turn out to exhibit undesirable behavior after being scaled up, more widely deployed, fine-tuned, connected to additional utilities and plug-ins, or otherwise placed into a more dangerous environment. Persons who train or deploy frontier AI are liable for such misbehavior.

(l) PUNITIVE DAMAGES.

(1) WHEN AVAILABLE.—Punitive damages shall be awarded whenever a defendant is held liable for a violation of the duty of care established by this section, if that defendant—

(A) recklessly engaged in misconduct while knowing that this misconduct had the potential to cause major security risks; or

(B) recklessly engaged in misconduct that narrowly avoided causing major security risks.

(2) AMOUNT.—In setting the amount of such punitive damages, a court shall take into account the high value to society of avoiding major security risks, and shall award an amount of punitive damages that is sufficient to deter future violations. In the absence of evidence to the contrary, an award of nine times the value of the compensatory damages shall be considered to balance society’s interest in avoiding major security risks with the requirements of due process.

(3) REPREHENSIBILITY.—A plaintiff is not required to prove malice or oppression in order to receive punitive damages based on this subsection. Instead, a plaintiff may demonstrate that a defendant’s tolerance for major security risks showed complete indifference to the safety of the public and is therefore sufficiently reprehensible to justify punitive damages.
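As an illustrative aside that is not part of the draft bill, the default multiplier in paragraph (2) can be worked through numerically. The function names are hypothetical, and the sketch covers only the default case "in the absence of evidence to the contrary"; a court could set a different amount.

```python
DEFAULT_PUNITIVE_MULTIPLIER = 9  # "nine times the value of the compensatory damages"


def default_punitive_damages(compensatory_usd):
    """Punitive award under the default nine-times presumption."""
    return DEFAULT_PUNITIVE_MULTIPLIER * compensatory_usd


def default_total_exposure(compensatory_usd):
    """Compensatory plus default punitive damages, i.e. ten times compensatory."""
    return compensatory_usd + default_punitive_damages(compensatory_usd)


compensatory = 100_000_000  # the subsection (e) threshold, used as an example figure
print(default_punitive_damages(compensatory))
print(default_total_exposure(compensatory))
```

At the $100 million threshold, the default presumption alone would put a defendant's combined exposure at $1 billion, before costs, fees, or any civil penalty.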