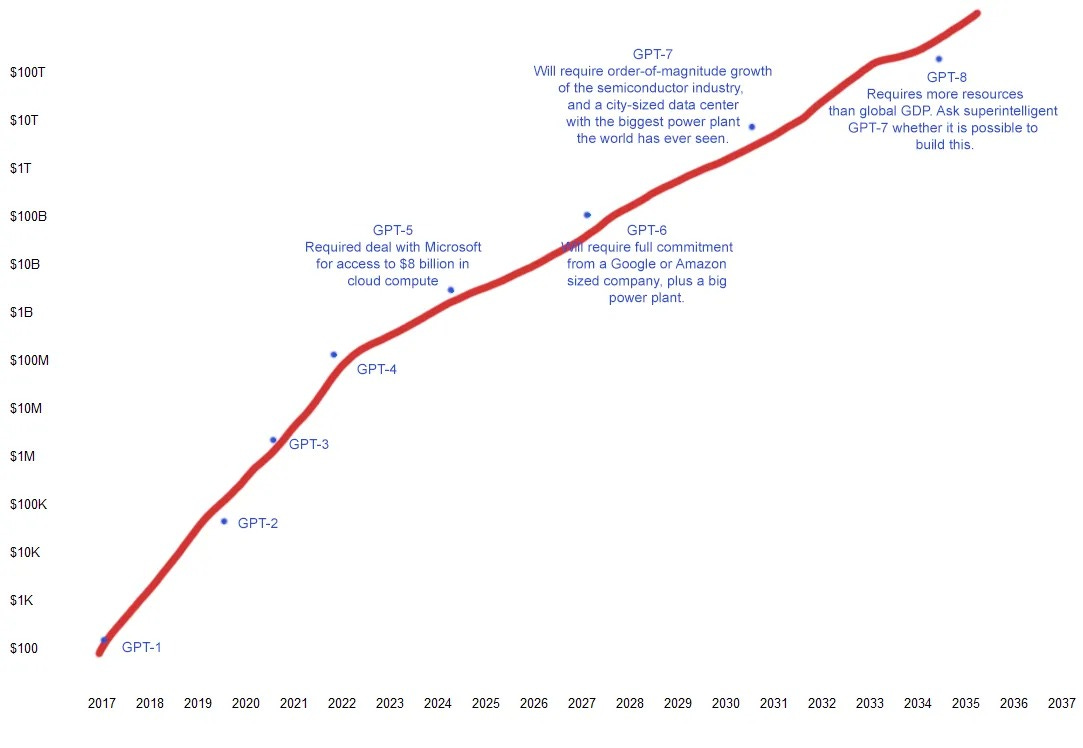

I’m on record that, globally, legislatures will fail to keep up with AI and courts will quickly need to take over on AI law. Two recent stories help to illustrate why. The first is Sam Altman’s hunt for 7 trillion dollars to increase global chip-building capacity and allow OpenAI to continue to build beyond the forthcoming GPT-5 generation of LLMs. The second is Scott Alexander’s reflection on the first story, with some added back-of-the-envelope projections. Here’s what they add up to:

Exponential growth feedback loop

Exponential growth is the general result when a feedback loop meets an abundant source of resources. In this case, the feedback loop is excited investment (and perhaps increased AI productivity). Alexander writes:

The upshot of this is that we’re looking at an exponential process, like R for a pandemic. If the exponent is > 1, it gets very big very quickly. If the exponent is < 1, it fizzles out.

In this case, if each new generation of AI is exciting enough to inspire more investment, and/or smart enough to decrease the cost of the next generation, then these two factors combined allow the creation of another generation of AIs in a positive feedback loop (R > 1).
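To make the R analogy concrete, here is a minimal sketch of the feedback loop. It assumes, purely for illustration, that each generation's available resources are the previous generation's multiplied by a constant factor `r`; the function name `simulate` and the sample values are hypothetical, not figures from Alexander's post.

```python
def simulate(r: float, generations: int, start: float = 1.0) -> list[float]:
    """Return relative resources per generation under a constant multiplier r.

    Illustrative assumption: each generation attracts r times the
    investment (or enjoys r times the efficiency) of the one before it.
    """
    sizes = [start]
    for _ in range(generations):
        sizes.append(sizes[-1] * r)
    return sizes


# r > 1: each generation funds a bigger successor, so the loop compounds.
print(simulate(2.0, 3))
# r < 1: each generation funds a smaller successor, so the loop fizzles.
print(simulate(0.5, 3))
```

The only thing that matters in this toy model is which side of 1 the multiplier lands on, which is the whole point of the pandemic comparison.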

Exponential growth lasts until the resources run out

The abundant resources in this case are, abstractly: computing power, electricity, and training data. Altman is already hunting for money to extend the excitement by building a lot more computers, but all these resources will run out. Alexander does some math:

Compute: [For GPT-4, over six months] OpenAI was using about 1/2000th of all the computers in the world … GPT-5 will take 1/70th of all the computers in the world, GPT-6 will take 1/2, and GPT-7 will take 15x as many computers as exist.

Electricity: GPT-4 needed about 50 gigawatt-hours of energy to train … we expect GPT-5 to need 1,500 … GPT-6 will need 10 GW - about half the output of the Three Gorges Dam, the biggest power plant in the world. GPT-7 will need fifteen Three Gorges Dams

Data: GPT-3 used 300 billion tokens. GPT-4 used 13 trillion tokens (another source says 6 trillion). GPT-5 will need somewhere in the vicinity of 50 trillion tokens, GPT-6 somewhere in the three-digit trillions, and GPT-7 somewhere in the quadrillions.
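The compute fractions quoted above can be roughly reproduced with a few lines of arithmetic. This is a back-of-the-envelope sketch that assumes a constant growth factor of about 30x per generation; that factor is inferred here from the quoted ratios (1/2000 to 1/70 is roughly 28.6x) and is not stated in the excerpt. The names `GROWTH_PER_GENERATION` and `project_share` are made up for this example.

```python
# ASSUMPTION: a constant ~30x step per generation, inferred from the
# quoted fractions rather than stated in the excerpt above.
GROWTH_PER_GENERATION = 30.0
GPT4_SHARE = 1 / 2000  # quoted share of the world's computers for GPT-4


def project_share(generation: int) -> float:
    """Multiple of today's world compute needed to train GPT-<generation>."""
    return GPT4_SHARE * GROWTH_PER_GENERATION ** (generation - 4)


for gen in range(4, 8):
    print(f"GPT-{gen}: {project_share(gen):.4f}x of today's world compute")
```

Under that assumption the projection stays below 1 through GPT-6 and passes it at GPT-7, which matches the shape of the quoted figures: the resources run out somewhere between those two generations.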

Scaling laws predict AI capabilities will also grow exponentially

Projecting out, it looks like GPT-7 may just be too big for any volume of excited investors. But that means the raw intelligence of the biggest models is still going to increase over two more GPT generations before things might start to level off: from the current GPT-4 to GPT-6.

Natural language processing and image recognition follow predictive scaling laws. Double either the compute or the data in either case, and you reliably get more than double the improvement in language generation or image classification tasks.

As a reminder, in retrospect, GPT-2 was moronic. GPT-4 can write infinite funny haiku, explain inflation, contrast historical periods, reason through word problems, produce working computer code, etc.

Understanding exponential growth is essential here. Change happens faster than we think, and staying on top of it takes nimbleness.

3-4 more years at this pace

With a huge grain of salt: if the pace of AI model releases observed from GPT-3 to GPT-4 holds steady and no major algorithmic breakthroughs occur, we might see GPT-5 as early as 2025 and GPT-6 a year or two after that, unless Google, Meta, Amazon, or Apple move faster.

Hard law doesn’t move that fast

The EU AI Act is a prime example of law that’s too slow. It was drafted with expert AI systems in mind, before generative AI models existed. Rules governing generative AI models like GPT-4 were crammed into the Act at the final hour. Whether the Act can handle its last-minute additions is yet to be seen, but if the next generation of models has unexpected abilities (as it likely will), a revised Act could take years and years to produce.

Double the improved AI capabilities, again and again, in less time than it takes the EU AI Act to complete its compliance timelines? Get ready to go to court.

My vague mental model of policy and law creation is that it's a slow, bureaucratic process due to the volume of information gathering and synthesis it necessitates. 1) Is this a vaguely reasonable take? and 2) Are there examples of quick-reaction regulatory bodies?