OpenAI released a new system last week based on an entirely new AI paradigm.

At its ceiling, ChatGPT o1-preview (“o1-preview”) produces occasional flashes of genius. That ceiling is remarkably high, but the floor remains far below human level. The o1-preview model is available only through a subscription. If you do not already have one, get it now so you can experiment with this technology in your own work.

Here’s a breakdown of what you need to know as a professional.

The Good

The o1-preview model represents a shift towards AI models capable of general-purpose reasoning. It autonomously corrects errors, refines strategies, breaks down complex tasks into simpler problems, and applies chain-of-thought reasoning to handle intricate problems. It also shows improved performance in formal logic and deep reasoning tasks.

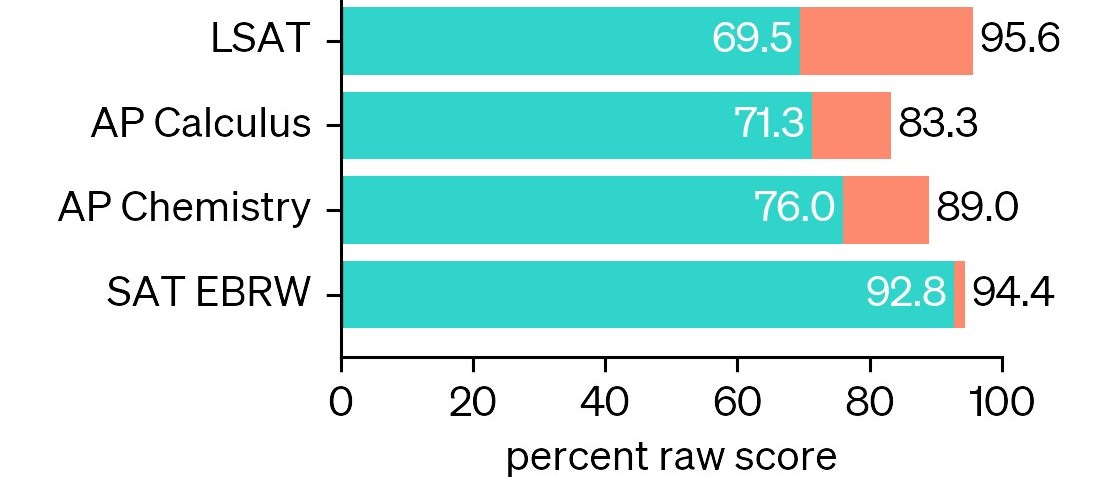

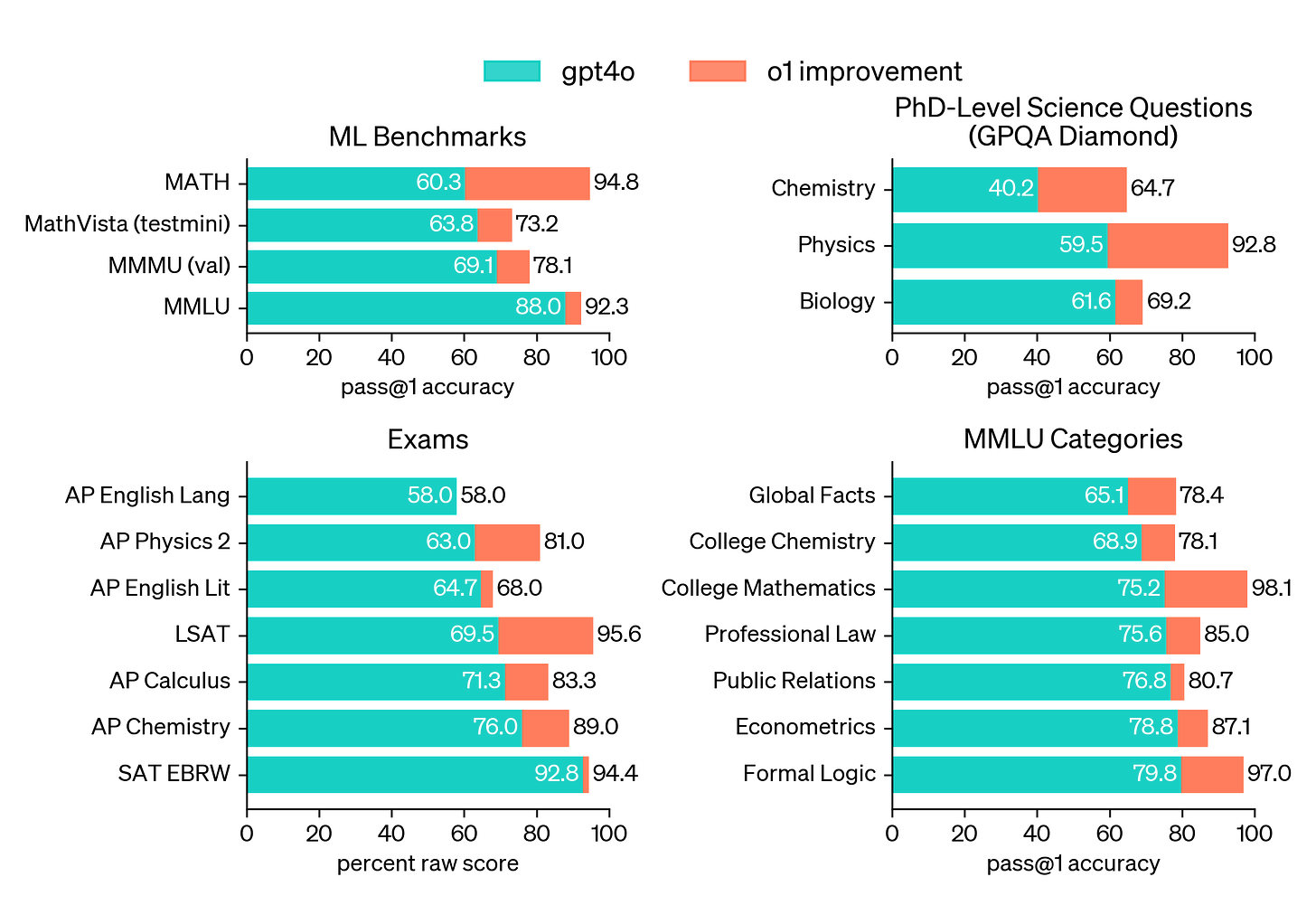

It excels in algorithmic tasks, formal logic, mathematics, chemistry, physics, crossword puzzles and logic games, medical diagnostics and treatment planning, and other reasoning-intensive challenges. On academic-style benchmark tests, its performance is comparable to that of skilled human professionals and PhD students.

According to UCLA professor and mathematician Terence Tao, the new model displays practical performance at a level comparable to a "mediocre but not completely incompetent graduate student" in mathematics. This assessment has been echoed by academics in related fields. Tao suggests that this paradigm needs to reach the level of a “competent graduate student” before it can advance his own research (and, by implication, displace many of his existing human graduate students).

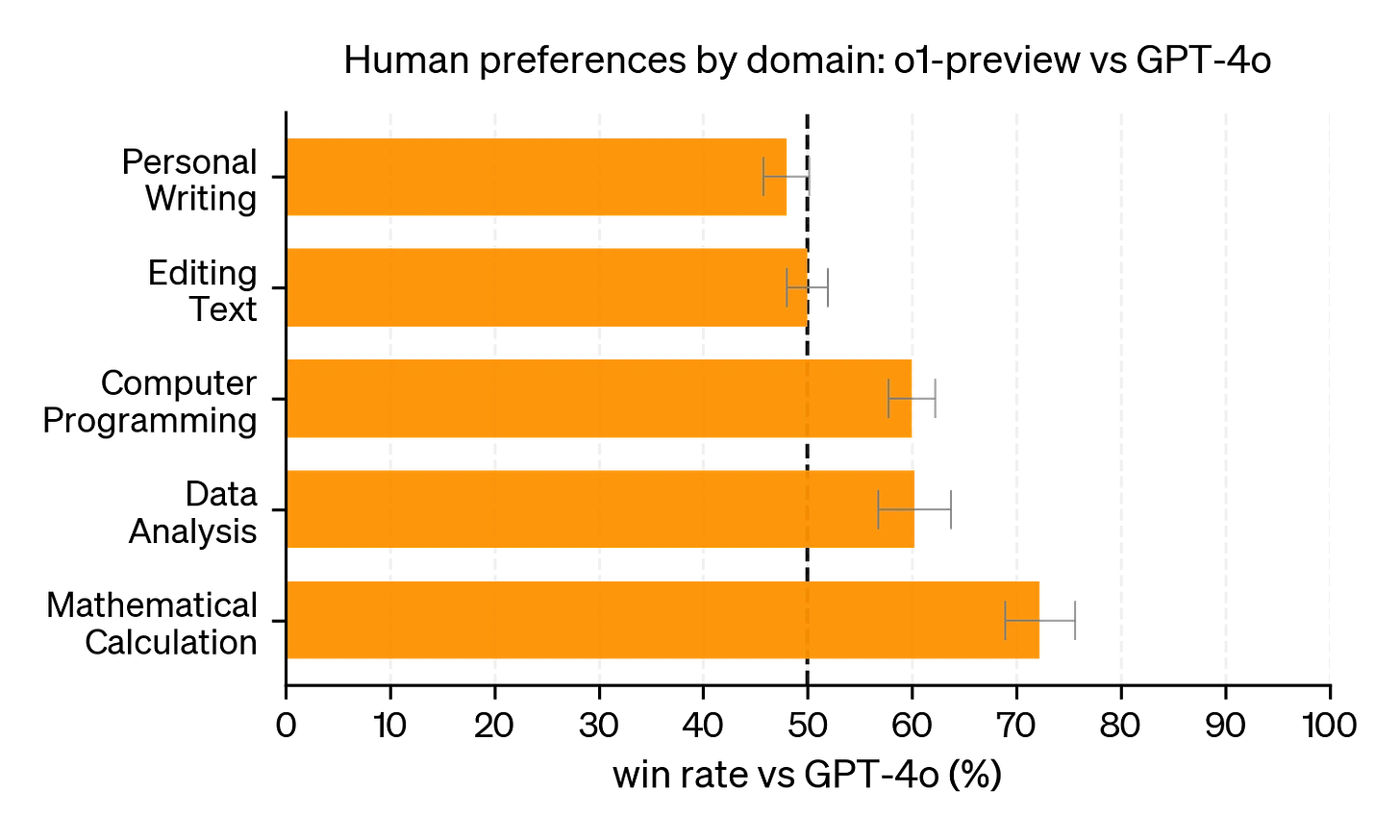

Note that LSAT performance improves significantly with o1-preview, which is consistent with the test's strong emphasis on logic and reasoning.

Applications in the legal profession may range from international tax to international inheritance: essentially any domain with complex logical connections and measurably correct outcomes. (Say goodbye to stressing about the rule against perpetuities.) Experimentation with all kinds of professional workflows is needed to shake out the benefits. You are AI R&D.

The Bad

This is not a flaw, but we need to be careful not to regard this system as a miracle. The way o1-preview is constructed is entirely new. When given a prompt, it samples hundreds or thousands of paths to a solution and uses an LLM to choose the best one. This is not reasoning from first principles. It is sorting through the best patterns of reasoning from the best of the web and applying them to new problems. It can produce genius. Fundamentally, however, it is still a language model and still makes LLM mistakes.
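To make the sample-and-select idea concrete, here is a minimal best-of-N sketch in Python. OpenAI has not published how o1-preview works internally, so this only illustrates the general technique described above, not the actual system. The names `best_of_n`, `toy_generate`, and `toy_score` are hypothetical, and the two toy functions are stand-ins for a language model and for the LLM that picks the winner.

```python
import random
from typing import Callable, List, Tuple


def best_of_n(
    prompt: str,
    generate: Callable[[str, random.Random], str],
    score: Callable[[str, str], float],
    n: int = 100,
    seed: int = 0,
) -> Tuple[str, float]:
    """Sample n candidate answers and return the highest-scoring one."""
    if n < 1:
        raise ValueError("n must be at least 1")
    rng = random.Random(seed)
    # Step 1: sample many independent candidate solutions.
    candidates: List[str] = [generate(prompt, rng) for _ in range(n)]
    # Step 2: score every candidate (in a real system, a model would judge these).
    scored = [(score(prompt, c), c) for c in candidates]
    # Step 3: keep the candidate with the highest score.
    best_score, best = max(scored, key=lambda pair: pair[0])
    return best, best_score


def toy_generate(prompt: str, rng: random.Random) -> str:
    # Hypothetical "model": guesses the answer to 17 * 3 and is often slightly off.
    return str(51 + rng.choice([-2, -1, 0, 0, 1, 2]))


def toy_score(prompt: str, candidate: str) -> float:
    # Hypothetical "judge": higher is better, and 0 means an exact match.
    return -abs(int(candidate) - 51)


if __name__ == "__main__":
    answer, quality = best_of_n("What is 17 * 3?", toy_generate, toy_score, n=50)
    print(answer, quality)
```

The sketch also shows why the floor stays low: the selected answer is only as good as the best sample and the judge's ability to recognize it. If every candidate is wrong, or the scorer prefers a wrong one, the system confidently returns a mistake.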

The improvements in this paradigm apply primarily to domains in which there is a clear correct and incorrect answer. In text editing, there are effectively no benefits, and for personal writing, the existing ChatGPT-4 (or, even better, Anthropic’s Claude) is still superior to this new paradigm. Perhaps seeking the ‘correct’ writing hurts the quality of literature.

Finally, effective use of o1-preview is going to require a very different kind of well-crafted prompt, especially for complex tasks. This is not the “prompt crafting” of the past two years. Users have reported feeling like they are pointing a powerful problem-solving cannon at a problem without necessarily being able to aim it with precision. We can only presume that, as with prior LLMs, our aim will improve over time.

The Ugly (Future)

This is a harbinger of new things. OpenAI explicitly wants to create general intelligence that can act autonomously in the world. While autonomous task completion with o1-preview is currently not significantly better than with previous models, there is a lot of potential for improvement with optimized prompting and scaffolding.

As the name suggests, o1-preview is only a ‘preview’ (likely released as a result of commercial pressures and, more importantly, due to a new investment round). The full system is still in development and may have new capabilities.

This new paradigm also indicates the potential for further advancements without increasing base model sizes. o1-preview spends its expensive compute when answering prompts, rather than requiring massive investments at the start. It should therefore be easier to scale up this paradigm than the previous one, and we should expect new models based on it to follow relatively quickly.

That said, increasing the massive up-front investments in base models will also boost the performance of these combined systems. o1-preview is based on GPT-4 and not the unreleased, reportedly 100x larger GPT-5 (codenamed Orion). Expect something like a “GPT-5o1” (or “5o2”) in the near future.

Finally, unlike ChatGPT-4o, o1-preview is not yet multimodal (images, voice, etc.). It’s difficult to even conceive of the possibilities, or the limits, of such a system.